I recently wrote an 80-page guide on how to get a programming job without a degree, curated from my experience helping students do just that at Springboard, a leading data science bootcamp. This excerpt gives an overview of the R programming language.

Description: R is an open-source programming language most often used by statisticians, data scientists, and academics to explore large data sets and distill insights from them. It offers multiple libraries that are useful for data processing tasks. Created by Ross Ihaka and Robert Gentleman at the University of Auckland, it is an implementation of its precursor language S, which John Chambers and colleagues developed at Bell Laboratories.

It has strong libraries for data visualization, time series analysis and a variety of statistical analysis tasks.

It’s free software that runs on a variety of operating systems, from UNIX to Windows to macOS. It is released under the GNU General Public License and is thus free to use and adapt.

Salary: Median salaries for R users tend to vary, with the main split being the difference between data analysts who use R to query existing data pipelines and data scientists who build those data pipelines and train different models programmatically on top of larger data sets. The difference can be stark, with around a $90,000 median salary for data scientists who use R, vs about a $60,000 median salary for data analysts who use R.

Uses: R is often used to analyze datasets, especially in an academic context. Most frameworks that have evolved around R focus on different methods of data processing. The ggplot family of libraries has been widely recognized as some of the top programming modules for data visualization.

Differentiation: R is often compared to Python when it comes to data analysis and data science tasks. Its strong data visualization and manipulation libraries along with its data analysis-focused community help make it a strong contender for any data transformation, analysis, and visualization tasks.

Most Popular GitHub Projects:

1- Mal

Mal is a Clojure-inspired Lisp interpreter with implementations in many languages, including R. With 4,500 stars, Mal has one of the lowest star counts needed to top a programming language’s repository rankings, which suggests that most of the open-source work done on R resides outside of GitHub.

2- Prophet

Prophet is a library that is able to do rich time series analysis by adjusting forecasts to account for seasonal and non-linear trends. It was created by Facebook and forms a part of the strong corpus of data analysis frameworks and libraries that exist for the R programming language.

3- ggplot2

Ggplot2 is a data visualization library for R that is based on the Grammar of Graphics. It is a library often used by data analysts and data scientists to display their results in charts, heatmaps, and more.

4- H2o-3

H2O-3 is an open-source machine learning platform with an R interface, similar in role to scikit-learn for Python. It allows people using the R programming language to run deep learning and other machine learning techniques on their data, an essential utility in an era where data is not nearly as useful without machine learning techniques.

5- Shiny

Shiny is an easy web application framework for R that allows you to build interactive websites in a few lines of code without any JavaScript. It uses an intuitive UI (user interface) component based on Bootstrap. Shiny can take all of the guesswork out of building something for the web with the R programming language.

Example Sites: There are not many websites built with R, which is used more for data analysis tasks and projects that are internal to one computer. However, you can build things with R Markdown and build different webpages. You might also use a web development framework such as Shiny if you wanted to create simple interactive web applications around your data.

Frameworks: The awesome repository comes up again with a great list of different R packages and frameworks you can use. A few that are worth mentioning are packages such as dplyr that help you assemble data in an intuitive tabular fashion, ggplot2 to help with data visualization and plotly to help with interactive web displays of R analysis. R libraries and frameworks are some of the most robust for doing ad hoc data analysis and displaying the results in a variety of formats.

Learning Path: This article helps frame the resources you need to learn R, and how you should learn it, starting from syntax and going to specific packages. It makes for a great introduction to the field, even if you’re an absolute beginner. If you want to apply R to data science projects and different data analysis tasks, Datacamp will help you learn the skills and mentality you need to do just that — you’ll learn everything from machine learning practices with R to how to do proper data visualization of the results.

Resources: R-bloggers is a large community of R practitioners and writers who aim to share knowledge about R with each other. This list of 60+ resources on R can be used in case you ever get lost trying to learn R.

I hope this is helpful for you! Want more material like this? Check out my guide to how to get a programming job without a degree.

Category: R

R as learning tool: solving integrals

Integrals are so easy only math teachers could make them difficult. When I was in high school I really disliked math and, with hindsight, I would say it was just because of the prehistoric teaching tools (when I saw this video I thought I’m not alone). I strongly believe that interaction CAUSES learning (I’m using “causes” here on purpose, being quite aware of the difference between correlation and causation), that practice should come before theory, and that imagination is not a skill you, as a teacher, can assume in your students.

Here follows a short and simple practical explanation of integrals. The only math-thing I will write here is the following: f(x) = x + 7. From now on everything will be coded in R.

So, first of all, what is a function? Instead of using the complex math philosophy let’s just look at it with a programming eye: it is a tool that takes something as input and returns something else as output. For example, if we use the previous tool with 2 as an input we get a 9. Easy peasy. Let’s look at the code:

# here we create the tool (called "f")

# it just takes an input and adds 7 to it

f <- function(x){x+7}

# if we apply it to 2 it returns a 9

f(2)

9

Then the second question comes by itself. What is an integral? Even simpler: it is just the sum of this tool’s outputs over many inputs in a range, each weighted by the small step between inputs. If that sounds complicated, let’s make it simpler with code:

# first we create the range of inputs

# basically x values go from 4 to 6

# with a very very small step (0.01)

# seq stands for sequence(start, end, step)

x <- seq(4, 6, 0.01)

x

4.00 4.01 4.02 4.03 4.04 4.05 4.06 4.07...

x[1]

4

x[2]

4.01

As you see, x has many values and each of them is indexed so it’s easy to find, e.g. the first element is 4 (x[1]). Now that we have many x values (201) within the interval from 4 to 6, we compute the integral.

# since we said that the integral is

# just a sum, let's call it IntSum and

# set it to the start value of 0

# in this way it will work as an accumulator

IntSum = 0

Unlike the theory, in which calculating the integral produces a new nonsense formula (just kidding, but this seems to be what math teachers are supposed to explain), here the integral produces an output, i.e. a number. We find this number by summing the output the tool gives for each input value (e.g. 4.01+7, 4.02+7, 4.03+7, etc.), each multiplied by the step between that value and the previous one (e.g. 4.01-4, 4.02-4.01, 4.03-4.02, etc.). Let’s clarify this, look down here:

# for each value of x

for(i in 2:201){

# we do a very simple thing:

# we cumulate with a sum

# the output value of the function f

# multiplied by each steps difference

IntSum = IntSum + f(x[i])*(x[i]-x[i-1])

# So for example,

# with the first and second x values the numbers will be:

#0.1101 = 0 + (4.01 + 7)*(4.01 - 4)

# with the second and third:

#0.2203 = 0.1101 + (4.02 + 7)*(4.02 - 4.01)

# with the third and fourth:

#0.3306 = 0.2203 + (4.03 + 7)*(4.03 - 4.02)

# and so on... with the sum (integral) growing and growing

# up until the last value

}

IntSum

24.01
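As a cross-check, the same sum can be written without an explicit loop. This is only a sketch added alongside the walkthrough: it assumes base R's diff() for the step widths and integrate() for a reference value, and the names steps and right_sum are made up for illustration.

```r
# the same tool and the same inputs as above
f <- function(x){x+7}
x <- seq(4, 6, 0.01)

# widths between consecutive x values (200 of them, each about 0.01)
steps <- diff(x)

# f at every x except the first, times its step width, then summed
right_sum <- sum(f(x[-1]) * steps)
right_sum

# base R's numerical integrator, for reference
integrate(f, lower = 4, upper = 6)$value
```

The loop and the vectorized sum both give 24.01, while integrate() reports 24. The extra 0.01 comes from evaluating f at the right edge of each bin, where this increasing function is slightly too high; it shrinks as the step gets smaller.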

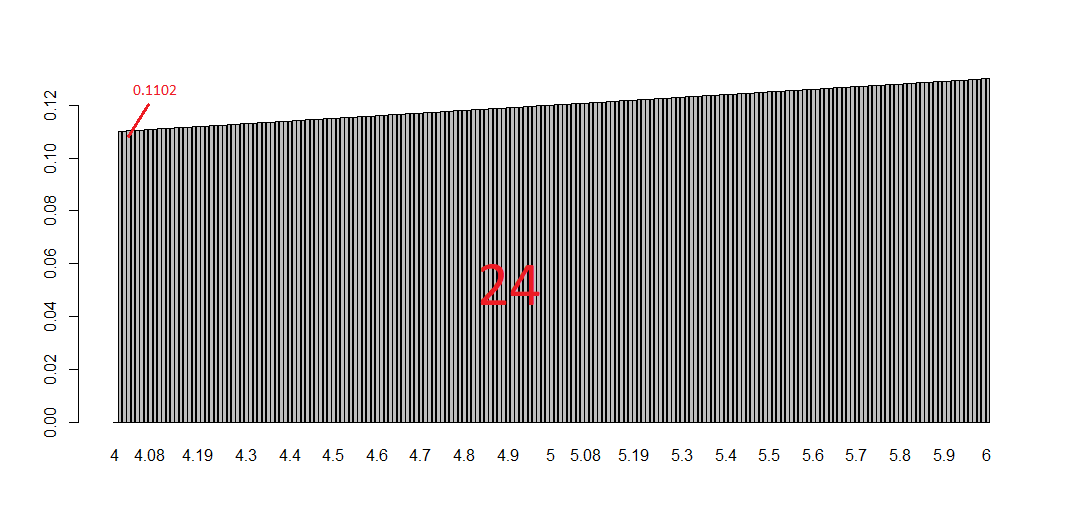

Done! We have the integral, but let’s have a look at a visualization of it, because it can be represented and made crystal clear. Let’s add a short line of code that saves the single number added to the sum each time. The reason why we decide to call it “bin” instead of, for example, “many_sum” will be clear in a moment.

# we need to store 201 calculations and we

# simply do what we did for IntSum but 201 times

bin = rep(0, 201)

bin

0 0 0 0 0 0 0 0 0 0 0 0 ...

Basically, we created a sort of memory to host each of the calculations, as you see down here:

# start the sum again from zero so it is not counted twice

IntSum = 0

for (i in 2:201){

# the sum as earlier

IntSum = IntSum + f(x[i])*(x[i]-x[i-1])

# overwrite each zero with each number

bin[i] = f(x[i])*(x[i]-x[i-1])

}

IntSum

24.01

bin

0.0000 0.1101 0.1102 0.1103 0.1104 0.1105 ..

sum(bin)

24.01
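The same bookkeeping works for any function and any step size, so it can be wrapped in a small helper for experimenting. This is a sketch under assumptions: riemann_sum is a made-up name, and it evaluates the function at the right edge of each bin, as the loops above do.

```r
# hypothetical helper: right-edge sum of f over [from, to] with a given step
riemann_sum <- function(f, from, to, step) {
  x <- seq(from, to, by = step)
  sum(f(x[-1]) * diff(x))
}

f <- function(x){x+7}

# fewer, wider bins versus more, narrower bins
riemann_sum(f, 4, 6, 0.5)
riemann_sum(f, 4, 6, 0.01)
riemann_sum(f, 4, 6, 0.001)

# a curvy function instead of a straight line
riemann_sum(sin, 0, pi, 0.001)
```

For f the three calls give 24.5, 24.01 and 24.001, closing in on 24 as the bins get narrower. The sin example lands very close to 2.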

Now if you look at the plot below you get the whole story: each bin is a tiny bar with a very small area and is the smallest part of the integral (i.e. the sum of all the bins).

# plotting them all

barplot(bin, names.arg=x)

This tells you a lot about the purpose of integrals and the possibility of calculating areas of curvy surfaces. To get an idea of this, just replace the function f with, let’s say, sin(x) or log(x). What is happening? And what if you increase/decrease the number of bins? Have fun replicating the code, changing some numbers and functions. Integrals should be clearer in the end. That’s all folks!


Using Shiny Dashboards for Financial Analysis

For some time now, I have been trading traditional assets—mostly U.S. equities. About a year ago, I jumped into the cryptocurrency markets to try my hand there as well. In my time in investor Telegram chats and subreddits, I often saw people arguing over which investments had performed better over time, but the reality was that most such statements were anecdotal, and thus unfalsifiable.

Given the paucity of cryptocurrency data available in an easily accessible format, it was quite difficult to say for certain that a particular investment was a good one relative to some alternative, unless you were very familiar with a handful of APIs. Even then, assuming you knew how to get daily OHLC (open, high, low, close) data for a crypto-asset like Bitcoin, in order to compare it to some other asset (say, Amazon stock) you would have to eyeball trends from a website like Yahoo Finance or scrape that data separately and build your own visualizations and metrics. In short, historical asset performance comparisons in the crypto space were difficult to conduct for all but the most technically savvy individuals, so I set out to build a tool that would remedy this, and the Financial Asset Comparison Tool was born.

In this post, I aim to describe a few key components of the dashboard, and also call out lessons learned from the process of iterating on the tool along the way. Prior to proceeding, I highly recommend that you read the app’s README and take a look at the UI and code base itself, as this will provide the context necessary to understanding the rest of the commentary below.

I’ll start by delving into a few principles that I find to be key when designing analytic dashboards, drawing on the asset comparison dashboard as my exemplar, and will end with some discussion of the relative utility of a few packages integral to the app. Overall, my goal is not to focus on the tool that I built alone, but to highlight a few main best practices when it comes to building dashboards for any analysis.

Build the app around the story, not the other way around.

Before ever writing a single line of code for an analytic app, I find that it is absolutely imperative to have a clear vision of the story that the tool must tell. I do not mean by this that you should already have conclusions about your data that you will then force the app into telling, but rather, that you must know how you want your user to interact with the app in order to glean useful information.

In the case of my asset comparison tool, I wanted to serve multiple audiences: everyone from a casual trader who just wanted to see which investment produced the greatest net profit over a period of time, to a more experienced trader who had more nuanced questions about risk-adjusted return on investment given varying discount rates. The trick is thus building the app in a way that serves all possible audiences without hindering any one type of user in particular.

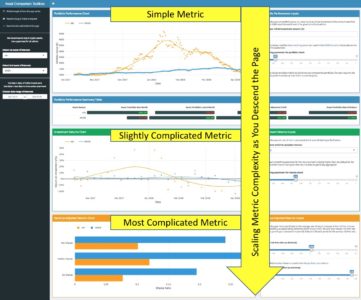

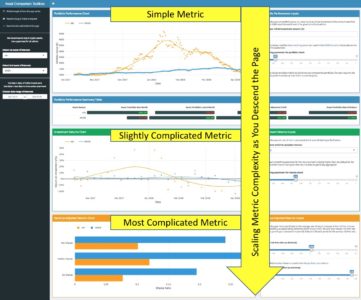

The way I designed my app to meet this need was to build the UI such that as you descend the various sections vertically, the metrics displayed scale in complexity. My reasoning for this becomes apparent when you consider the two extremes in terms of users—the most basic vs. the most advanced trader.

The most basic user will care only about the assets of interest, the time period they want to examine, and how their initial investment performed over time. As such, they will start with the sidebar, input their assets and time frame of choice, and then use the top right-most input box to modulate their initial investment amount (although some may choose to stick with the default value here). They will then see the first chart change to reflect their choices, and they will see, both visually, and via the summary table below, which asset performed better.

The experienced trader, on the other hand, will start off exactly as the novice did, by choosing assets of interest, a time frame of reference, and an initial investment amount. They may then choose to modulate the LOESS parameters as they see fit, descending the page, looking over the simple returns section, perhaps stopping to make changes to the corresponding inputs there, and finally ending at the bottom of the page—at the Sharpe Ratio visualizations. Here they will likely spend more time—playing around with the time period over which to measure returns and changing the risk-free rate to align with their own personal macroeconomic assumptions.

The point of these two examples is to illustrate that the app by dint of its structure alone guides the user through the analytic story in a waterfall-like manner—building from simple portfolio performance, to relative performance, to the most complicated metrics for risk-adjusted returns. This keeps the novice trader from being overwhelmed or confused, and also allows the most experienced user to follow the same line of thought that they would anyway when comparing assets, while following a logical progression of complexity, as shown via the screenshot below.

Once you think you have a structure that guides all users through the story you want them to experience, test it by asking yourself if the app flows in such a way that you could pose and answer a logical series of questions as you navigate the app without any gaps in cohesion. In the case of this app, the questions that the UI answers as you descend are as follows:

Thus, when you string these questions together, you can make statements of the type: “Asset X seemed to outperform Asset Y in terms of absolute profit, and this trend held true as well when it comes to simple return on investment, over varying time frames. That said, when you take into account the variance inherent to Asset X, it seems that Asset Y may have been the best choice, as the excess downside risk associated with Asset X outweighs its excess net profitability.”
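A statement like this usually rests on a risk-adjusted metric such as the Sharpe ratio: mean return in excess of the risk-free rate, divided by the volatility of returns. The sketch below is not the app's own calculation. The helper name sharpe_ratio, the made-up return series, and the choice to express the risk-free rate per period are all assumptions for illustration.

```r
# hypothetical helper: mean excess return per unit of volatility
# (risk_free must be expressed for the same period as the returns)
sharpe_ratio <- function(returns, risk_free = 0) {
  (mean(returns) - risk_free) / sd(returns)
}

# made-up periodic returns: X earns more on average but swings much harder
asset_x <- c(0.10, -0.08, 0.12, -0.06, 0.07)
asset_y <- c(0.02, 0.01, 0.03, 0.02, 0.02)

# X wins on average return ...
mean(asset_x) > mean(asset_y)

# ... but Y wins once volatility is taken into account
sharpe_ratio(asset_x, risk_free = 0.005) < sharpe_ratio(asset_y, risk_free = 0.005)
```

Both comparisons come out TRUE for these numbers, which is the "X earns more, Y is the better risk-adjusted choice" situation described in the quote.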

Too many cooks in the kitchen—the case for a functional approach to app-building.

While the design of the UI of any analytic app is of great importance, it’s important to not forget that the code base itself should also be well-designed; a fully-functional app from the user’s perspective can still be a terrible app to work with if the code is a jumbled, incomprehensible mess. A poorly designed code base makes QC a tiresome, aggravating process, and knowledge sharing all but impossible.

For this reason, I find that sourcing a separate R script file containing all analytic functions necessitated by the app is the best way to go, as done below (you can see Functions.R at my repo here).
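As a minimal sketch of the sourcing pattern, assuming nothing about the real Functions.R: the snippet writes a stand-in file to a temporary directory so it can run anywhere, then loads it with source(). The function simple_return and the file contents are hypothetical.

```r
# hypothetical stand-in for Functions.R, written to a temp file for the demo
functions_file <- file.path(tempdir(), "Functions.R")
writeLines(c(
  "simple_return <- function(prices) {",
  "  (prices[length(prices)] - prices[1]) / prices[1]",
  "}"
), functions_file)

# in app.R, a single line makes every analytic function available
source(functions_file)

# the function can now be called like any other
simple_return(c(100, 110, 121))
```

In a real project the file would simply sit next to app.R and the call would be source("Functions.R").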

Not only does this allow for a more comprehensible and less-cluttered App.R, but it also drastically improves testability and reusability of the code. Consider the example function below, used to create the portfolio performance chart in the app (first box displayed in the UI, center middle).

Writing this function in the sourced Functions.R file instead of directly within the App.R allows for the developer to first test the function itself with fake data—i.e. data not gleaned from the reactive inputs. Once it has been tested in this way, it can be integrated in the app.R on the server side as shown below, with very little code.
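The two-step workflow can be sketched as follows. The function portfolio_value, its arguments, and the names base_data and initial_investment in the commented server snippet are hypothetical, chosen only to illustrate testing outside the app first.

```r
# hypothetical analytic function of the kind that would live in Functions.R;
# it depends only on its arguments, not on any Shiny reactive
portfolio_value <- function(prices, initial_investment = 1000) {
  stopifnot(is.numeric(prices), length(prices) >= 1, prices[1] > 0)
  shares <- initial_investment / prices[1]  # units bought on the first day
  shares * prices                           # value of the holding on each day
}

# step 1: test in a vacuum with fake data
fake_prices <- c(50, 55, 45, 60)
portfolio_value(fake_prices, initial_investment = 1000)

# step 2 (inside the Shiny server function, not run here): the call stays short
#
#   output$portfolio_plot <- renderPlot({
#     plot(portfolio_value(base_data()$price, input$initial_investment), type = "l")
#   })
```

With the fake prices above, 1000 buys 20 units, so the holding is worth 1000, 1100, 900 and 1200 over the four days.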

This process allows for better error-identification and troubleshooting. If, for example, you want to change the work accomplished by the analytic function in some way, you can make the changes necessary to the code, and if the app fails to produce the desired outcome, you simply restart the chain: first you test the function in a vacuum outside of the app, and if it runs fine there, then you know that you have a problem with the way the reactive inputs are integrating with the function itself. This is a huge time saver when debugging.

Lastly, this allows for ease of reproducibility and hand-offs. If, say, one of your functions simply takes in a dataset and produces a chart of some sort, then it can be easily copied from the Functions.R and reused elsewhere. I have done this too many times to count, ripping code from one project and, with a few alterations, instantly applying it in other contexts. This is easy to do if the functions are written in a manner not dependent on a particular Shiny reactive structure. For all these reasons, it makes sense in most cases to keep the code for the app UI and inputs cleanly separated from the analytic functions via a sourced R script.

Dashboard documentation—both a story and a manual, not one or the other.

When building an app for a customer at work, I never simply write an email with a link in it and write “here you go!” That will result in, at best, a steep learning curve, and at worst, an app used in an unintended way, resulting in user frustration or incorrect results. I always meet with the customer, explain the purpose and functionalities of the tool, walk through the app live, take feedback, and integrate any key takeaways into further iterations.

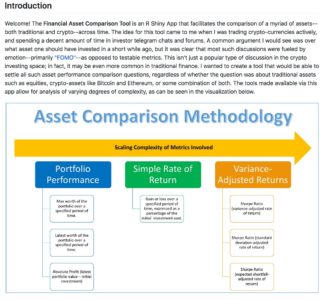

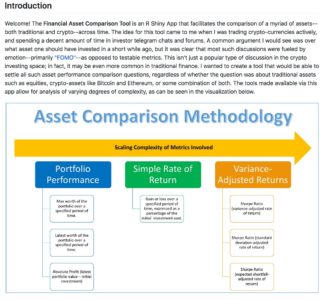

Even if you are just planning on writing some code to put up on GitHub, you should still consider all of these steps when working on the documentation for your app. In most cases, the README is the epicenter of your documentation—the README is your meeting with the customer. As you saw when reading the README for the Asset Comparison Tool, I always start my READMEs with a high-level introduction to the purpose of the app—hopefully written or supplemented with visuals (as seen below) that are easy to understand and will capture the attention of browsing passers-by.

After this introduction, the rest of the potential sections to include can vary greatly from app-to-app. In some cases apps are meant to answer one particular question, and might have a variety of filters or supplemental functionalities—one such example can be found here. As can be seen, in that README, I spend a great deal of time on the methodology after making the overall purpose clear, calling out additional options along the way. In the case of the README for the Asset Comparison Tool, however, the story is a bit different. Given that there are many questions that the app seeks to answer, it makes sense to answer each in turn, writing the README in such a way that its progression mirrors the logical flow of the progression intended for the app’s user.

One should of course not neglect to cover necessary technical information in the README as well. Anything that is not immediately clear from using the app should be clarified in the README—from calculation details to the source of your data, etc. Finally, don’t neglect the iterative component! Mention how you want to interact with prospective users and collaborators in your documentation. For example, I normally call out how I would like people to use the Issues tab on GitHub to propose any changes or additions to the documentation, or the app in general. In short, your documentation must include both the story you want to tell, and a manual for your audience to follow.

Why Shiny Dashboard?

One of the first things you will notice about the app.R code is that the entire thing is built using Shiny Dashboard as its skeleton. There are two main reasons for this, which I will touch on in turn.

Shiny Dashboard provides the biggest bang for your buck in terms of how much UI complexity and customizability you get out of just a small amount of code.

I can think of few cases where any analyst or developer would prefer longer, more verbose code to a shorter, succinct solution. That said, Shiny Dashboard’s simplicity when it comes to UI manipulation and customization is not just helpful because it saves you time as a coder, but because it is intuitive from the perspective of your audience.

Most of the folks that use the tools I have built to shed insight into economic questions don’t know how to code in R or Python, but they can, with a little help from extensive commenting and detailed documentation, understand the broad structure of an app coded in Shiny Dashboard format. This is, I believe, largely a function of two features of Shiny Dashboard: the colloquial-English-like syntax of the code for UI elements, and the lack of the necessity for in-line or external CSS.

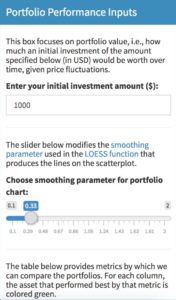

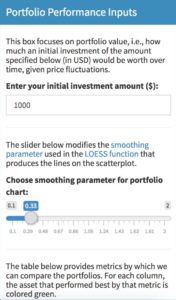

As you can see from the example below, Shiny Dashboard’s system of “boxes” for UI building is easy to follow. Users can see a box in the app and easily tie that back to a particular box in the UI code.

Here is the box as visible to the user:

And here is the code that produces the box:
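The app's own box code lives in its repository. As a rough illustration of the pattern, assuming the shiny and shinydashboard packages are installed, a box might be declared like this; the title, input ID and output ID are made up.

```r
library(shiny)
library(shinydashboard)

# hypothetical box: a title, a status colour, one input and one plot placeholder
performance_box <- box(
  title = "Portfolio Performance",
  status = "primary",   # box-specific status sets the header colour
  solidHeader = TRUE,
  width = 12,
  numericInput("initial_investment", "Initial investment ($)", value = 1000, min = 0),
  plotOutput("portfolio_plot")
)

# the box sits in the dashboard body; the skin sets the dashboard-wide colours
ui <- dashboardPage(
  dashboardHeader(title = "Asset Comparison"),
  dashboardSidebar(),
  dashboardBody(fluidRow(performance_box)),
  skin = "blue"
)
```

Each argument reads close to plain English, and no CSS appears anywhere: the colours come from the status and skin arguments alone. Passing ui to shinyApp() together with a server function would launch the page.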

Secondly, and somewhat related to the first point, with Shiny Dashboard, much of the coloring and overall UI design comes pre-made via dashboard-wide “skins”, and box-specific “statuses.”

This is great if you are okay sacrificing a bit of control for a significant reduction in code complexity. In my experience dealing with non-coding-proficient audiences, I find that in-line CSS or complicated external CSS makes folks far more uncomfortable with the code in general. Anything you can do to reduce this anxiety and make those using your tools feel as though they understand them better is a good thing, and Shiny Dashboard makes that easier.

Shiny Dashboard’s combination of sidebar and boxes makes for easy and efficient data processing when your app has a waterfall-like analytic structure.

Having written versions of this app both in base Shiny and using Shiny Dashboard, the number one reason I chose Shiny Dashboard was the fact that the analytic questions I sought to solve followed a waterfall-like structure, as explained in the previous section. This works perfectly well with Shiny Dashboard’s combination of sidebar input controls and inputs within UI boxes themselves.

The inputs of primary importance to all users are included in the sidebar UI: the two assets to analyze, and the date range over which to compare their performance. These are the only inputs that all users, regardless of experience or intent, must absolutely use, and when they are changed, all views in the dashboard will be affected. All other inputs are stored in the UI Boxes adjacent to the views that they modulate. This makes for a much more intuitive and fluid user experience, as once the initial sidebar inputs have been modulated, the sidebar can be hidden, as all other non-hidden inputs affect only the visualizations to which they are adjacent.

This waterfall-like structure also makes for more efficient reactive processes on the Shiny back-end. The inputs on the sidebar are parameters that, when changed, force the main reactive function that creates the primary dataset to fire, thus recreating the base dataset (as can be seen in the code for that base dataset’s creation below).

Each of the visualizations is then produced via its own separate reactive function, each of which takes the main reactive as an input (as shown below). This makes it so that whenever the sidebar inputs are changed, all reactives fire and all visualizations are updated; however, if all that is changed is a single loess smoothing parameter input, only the reactive used in the creation of that particular parameter-dependent visualization fires, which makes for great computational efficiency.
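The dependency structure described here can be tried outside a running app. The sketch below assumes the shiny package and uses isolate() to read reactives from a plain script. The input names, the toy data and the run counters are made up, and the "views" return lists rather than real charts.

```r
library(shiny)

# counters so we can see which reactives actually re-run
runs <- c(base = 0, loess = 0, sharpe = 0)

# stand-ins for the sidebar input (asset) and two box-level inputs
inputs <- reactiveValues(asset = "BTC", span = 0.5, risk_free = 0.02)

# main reactive: depends only on the sidebar input
base_data <- reactive({
  runs["base"] <<- runs["base"] + 1
  data.frame(asset = inputs$asset, price = c(100, 105, 98, 110))
})

# each view depends on the main reactive plus its own adjacent input
loess_view <- reactive({
  runs["loess"] <<- runs["loess"] + 1
  list(data = base_data(), span = inputs$span)
})
sharpe_view <- reactive({
  runs["sharpe"] <<- runs["sharpe"] + 1
  list(data = base_data(), risk_free = inputs$risk_free)
})

# first "render": every reactive runs once
invisible(isolate(list(loess_view(), sharpe_view())))

# change only the smoothing parameter, then "render" again
inputs$span <- 0.8
invisible(isolate(list(loess_view(), sharpe_view())))

runs
```

After the second pass only the loess counter should have advanced: the base dataset and the Sharpe view are served from their cached values because nothing they depend on changed.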

Why Plotly?

Plotly vs. ggplot is always a fun subject for discussion among folks who build visualizations in R. Sometimes I feel like such discussions just devolve into the same type of argument as R vs. Python for data science (my answer to this question being just pick one and learn it well), but over time I have found that there are actually some circumstances where the plotly vs. ggplot debate can yield cleaner answers.

In particular, I have found in working on this particular type of analytic app that there are two areas where plotly has a bit of an advantage: clickable interactivity, and wide data.

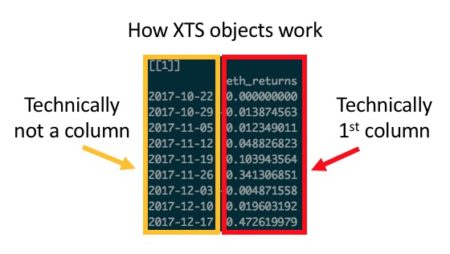

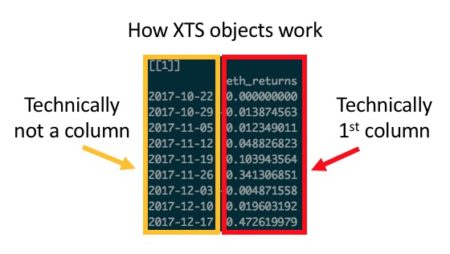

Those familiar with ggplot will know that every good ggplot begins with long data. It is possible, via some functions, to transform wide data into a long format, but that transformation can sometimes be problematic. While there are essentially no circumstances in which it is impossible to transform wide data into long format, there are a handful of cases where it is excessively cumbersome: namely, when dealing with indexed xts objects (as shown below) or time series / OHLC-styled data.

In these cases—either due to the sometimes-awkward way in which you have to handle rowname indexes in xts, or the time and code complexity saved by not having to transform every dataset into long format—plotly offers efficiency gains relative to ggplot.
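For readers less familiar with the wide versus long distinction, here is a small base R sketch. The column names and prices are made-up placeholders, not real market data.

```r
# hypothetical wide data: one row per date, one column per asset
wide <- data.frame(
  date = as.Date("2018-01-01") + 0:2,
  BTC  = c(100, 110, 120),
  AMZN = c(200, 190, 210)
)

# long format: one row per date-asset pair, the shape ggplot typically expects
long <- data.frame(
  date  = rep(wide$date, times = 2),
  asset = rep(c("BTC", "AMZN"), each = nrow(wide)),
  price = c(wide$BTC, wide$AMZN)
)

dim(wide)
dim(long)
```

The wide table has 3 rows and the long one 6. With only two assets the reshaping is trivial, but the extra step has to be repeated for every dataset feeding a ggplot, whereas a wide table can be plotted by adding one trace per column.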

The aforementioned efficiency gains are a reason to choose plotly in some cases because it makes the life of the coder easier, but there are also reasons why it sometimes makes the life of the user easier as well.

If one of the primary utilities of a visualization is to allow the user the ability to seamlessly and intuitively zoom in on, select, or filter the data displayed, particularly in the context of a Shiny App, then plotly should be strongly considered. Sure, ggplotly wrappers can be used to make a ggplot interactive, but with an added layer of abstraction comes an added layer of possible errors. While in most cases a ggplotly wrapper should work seamlessly, I have found that, particularly in cases where auto-sizing and margin size specification is key, ggplotly can require a great deal of added code in order to work correctly in a Shiny context.

In summary, when considering when to start with plotly vs. when to start with ggplot, I find one question to be particularly helpful: what do I value most—visual complexity and/or customization, or interactive versatility and/or preserving wide data?

If I choose the former, then ggplot is what I need; otherwise, I go with plotly. More often than not I find that ggplot emerges victorious, but even if you disagree with me in my decision-making calculus, I think it is helpful to at least think through what your personal calculus is. This will save you time when coding, as instead of playing around with various types of viz, you can simply pose the question(s) behind your calculus and know quickly what solution best fits your problem.

Why Formattable?

The case for formattable is, in my opinion, a much easier case to make than arguing for plotly vs. ggplot. The only question worth asking when deciding on whether or not to use formattable in your app is: do I want my table to tell a quick story via added visual complexity within the same cell that contains my data, or is a reference table all I am looking for? If you choose the former, formattable is probably a good way to go. You’ll notice as well that the case for formattable is very specific: in most cases there is likely a simpler solution via the DT or kableExtra packages.

The one downside that I have encountered in dealing with formattable code is the amount of code necessary to generate even moderately complicated tables. That said, this problem is easily remedied via a quick function that we can use to kill most of the duplicative coding, as seen in the example below.

First, here is the long form version:

This code can easily be shortened via the integration of a custom function, as shown below.
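The app's own tables are more involved, but the idea can be sketched with a toy example. This assumes the formattable package is installed; the data frame, the colour rule and the helper name sign_colour are made up for illustration.

```r
library(formattable)

# made-up summary table
returns <- data.frame(
  asset  = c("Asset X", "Asset Y"),
  profit = c(250, -40),
  roi    = c(0.25, -0.04)
)

# long form: the same colour rule is spelled out for every column
long_form <- formattable(returns, list(
  profit = formatter("span", style = x ~ style(color = ifelse(x >= 0, "green", "red"))),
  roi    = formatter("span", style = x ~ style(color = ifelse(x >= 0, "green", "red")))
))

# short form: a small helper holds the rule once
sign_colour <- function() {
  formatter("span", style = x ~ style(color = ifelse(x >= 0, "green", "red")))
}

short_form <- formattable(returns, list(profit = sign_colour(), roi = sign_colour()))
```

With two columns the saving is small; with a dozen columns and several rules, the helper removes most of the duplication.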

As can be seen, formattable allows for a great deal of added complexity in crafting your table—complexity that may not be suited for all apps. That said, if you do want to quickly draw a user’s attention to something in a table, formattable is a great solution, and most of the details of the code can be greatly simplified via a function, as shown.

Conclusions:

That was a lot, I know, but I hope that this commentary, and my exemplar of the Asset Comparison Tool more generally, has helped to inform your understanding of how dashboards can serve as a helpful analytic tool. Furthermore, I hope to have prompted some thoughts as to the best practices to be followed when building such a tool. I’ll end with a quick tl;dr:

Thanks for reading!

Given the paucity of cryptocurrency data available in an easily accessible format, it was quite difficult to say for certain that a particular investment was a good one relative to some alternative, unless you were very familiar with a handful of APIs. Even then, assuming you knew how to get daily OHLC data for a crypto-asset like Bitcoin, in order to compare it to some other asset—say Amazon stock—you would have to eyeball trends from a website like Yahoo finance or scrape that data separately and build your own visualizations and metrics. In short, historical asset performance comparisons in the crypto space were difficult to conduct for all but the most technically savvy individuals, so I set out to build a tool that would remedy this, and the Financial Asset Comparison Tool was born.

In this post, I aim to describe a few key components of the dashboard, and also call out lessons learned from the process of iterating on the tool along the way. Prior to proceeding, I highly recommend that you read the app’s README and take a look at the UI and code base itself, as this will provide the context necessary to understanding the rest of the commentary below.

I’ll start by delving into a few principles that I find to be to key when designing analytic dashboards, drawing on the asset comparison dashboard as my exemplar, and will end with some discussion of the relative utility of a few packages integral to the app. Overall, my goal is not to focus on the tool that I built alone, but to highlight a few main best practices when it comes to building dashboards for any analysis.

Build the app around the story, not the other way around.

Before ever writing a single line of code for an analytic app, I find that it is absolutely imperative to have a clear vision of the story that the tool must tell. I do not mean by this that you should already have conclusions about your data that you will then force the app into telling, but rather, that you must know how you want your user to interact with the app in order glean useful information.

In the case of my asset comparison tool, I wanted to serve multiple audiences: everyone from a casual trader who just wanted to see which investment produced the greatest net profit over a period of time, to a more experienced trader who had more nuanced questions about risk-adjusted return on investment given varying discount rates. The trick is thus building the app in such a way that it serves all possible audiences without hindering any one type of user in particular.

The way I designed my app to meet this need was to build the UI such that as you descend the various sections vertically, the metrics displayed scale in complexity. My reasoning for this becomes apparent when you consider the two extremes in terms of users—the most basic vs. the most advanced trader.

The most basic user will care only about the assets of interest, the time period they want to examine, and how their initial investment performed over time. As such, they will start with the sidebar, input their assets and time frame of choice, and then use the top right-most input box to modulate their initial investment amount (although some may choose to stick with the default value here). They will then see the first chart change to reflect their choices, and they will see, both visually, and via the summary table below, which asset performed better.

The experienced trader, on the other hand, will start off exactly as the novice did, by choosing assets of interest, a time frame of reference, and an initial investment amount. They may then choose to modulate the LOESS parameters as they see fit, descending the page, looking over the simple returns section, perhaps stopping to make changes to the corresponding inputs there, and finally ending at the bottom of the page—at the Sharpe Ratio visualizations. Here they will likely spend more time—playing around with the time period over which to measure returns and changing the risk-free rate to align with their own personal macroeconomic assumptions.

The point of these two examples is to illustrate that the app by dint of its structure alone guides the user through the analytic story in a waterfall-like manner—building from simple portfolio performance, to relative performance, to the most complicated metrics for risk-adjusted returns. This keeps the novice trader from being overwhelmed or confused, and also allows the most experienced user to follow the same line of thought that they would anyway when comparing assets, while following a logical progression of complexity, as shown via the screenshot below.

Once you think you have a structure that guides all users through the story you want them to experience, test it by asking yourself if the app flows in such a way that you could pose and answer a logical series of questions as you navigate the app without any gaps in cohesion. In the case of this app, the questions that the UI answers as you descend are as follows:

- How do these assets compare in terms of absolute profit?

- How do these assets compare in terms of simple return on investment?

- How do these assets compare in terms of variance-adjusted and/or risk-adjusted return on investment?

Thus, when you string these questions together, you can make statements of the type: “Asset X seemed to outperform Asset Y in terms of absolute profit, and this trend held true as well when it comes to simple return on investment, over varying time frames. That said, when you take into account the variance inherent to Asset X, it seems that Asset Y may have been the best choice, as the excess downside risk associated with Asset X outweighs its excess net profitability.”
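To make the three questions concrete, here is a minimal sketch in base R of the metrics behind them, using a handful of made-up prices. The variable names, the prices, and the per-period risk-free rate are all assumptions for illustration; this is not the code the app itself uses.

```r
# Made-up closing prices for one asset over six periods
prices <- c(100, 102, 101, 105, 110, 108)
initial_investment <- 1000

# Question 1: absolute profit on the initial investment
portfolio_value <- initial_investment * prices / prices[1]
absolute_profit <- tail(portfolio_value, 1) - initial_investment  # 80 with these prices

# Question 2: simple return on investment over the whole window
simple_return <- absolute_profit / initial_investment  # 0.08 with these prices

# Question 3: a Sharpe-style ratio, i.e. mean excess return per unit of volatility
period_returns <- diff(prices) / head(prices, -1)
risk_free_rate <- 0.001  # assumed per-period risk-free rate
sharpe_ratio <- mean(period_returns - risk_free_rate) / sd(period_returns)
```

Running the same three steps for a second asset gives the side-by-side comparison that the statement above describes.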

Too many cooks in the kitchen—the case for a functional approach to app-building.

While the design of the UI of any analytic app is of great importance, it’s important to not forget that the code base itself should also be well-designed; a fully-functional app from the user’s perspective can still be a terrible app to work with if the code is a jumbled, incomprehensible mess. A poorly designed code base makes QC a tiresome, aggravating process, and knowledge sharing all but impossible.

For this reason, I find that sourcing a separate R script file containing all analytic functions necessitated by the app is the best way to go, as done below (you can see Functions.R at my repo here).

# source the Functions.R file, where all analytic functions for the app are stored

source("Functions.R")

Not only does this allow for a more comprehensible and less-cluttered App.R, but it also drastically improves testability and reusability of the code. Consider the example function below, used to create the portfolio performance chart in the app (first box displayed in the UI, center middle).

build_portfolio_perf_chart <- function(data, port_loess_param = 0.33){

port_tbl <- data[,c(1,4:5)]

# grabbing the 2 asset names

asset_name1 <- sub('_.*', '', names(port_tbl)[2])

asset_name2 <- sub('_.*', '', names(port_tbl)[3])

# transforms dates into correct type so smoothing can be done

port_tbl[,1] <- as.Date(port_tbl[,1])

date_in_numeric_form <- as.numeric((port_tbl[,1]))

# assigning loess smoothing parameter

loess_span_parameter <- port_loess_param

# now building the plotly itself

port_perf_plot <- plot_ly(data = port_tbl, x = ~port_tbl[,1]) %>%

# asset 1 data plotted

add_markers(y =~port_tbl[,2],

marker = list(color = '#FC9C01'),

name = asset_name1,

showlegend = FALSE) %>%

add_lines(y = ~fitted(loess(port_tbl[,2] ~ date_in_numeric_form, span = loess_span_parameter)),

line = list(color = '#FC9C01'),

name = asset_name1,

showlegend = TRUE) %>%

# asset 2 data plotted

add_markers(y =~port_tbl[,3],

marker = list(color = '#3498DB'),

name = asset_name2,

showlegend = FALSE) %>%

add_lines(y = ~fitted(loess(port_tbl[,3] ~ date_in_numeric_form, span = loess_span_parameter)),

line = list(color = '#3498DB'),

name = asset_name2,

showlegend = TRUE) %>%

layout(

title = FALSE,

xaxis = list(type = "date",

title = "Date"),

yaxis = list(title = "Portfolio Value ($)"),

legend = list(orientation = 'h',

x = 0,

y = 1.15)) %>%

add_annotations(

x= 1,

y= 1.133,

xref = "paper",

yref = "paper",

text = "",

showarrow = FALSE

)

return(port_perf_plot)

}

Writing this function in the sourced Functions.R file instead of directly within app.R allows the developer to first test the function itself with fake data, i.e., data not gleaned from the reactive inputs. Once it has been tested in this way, it can be integrated into app.R on the server side as shown below, with very little code.

output$portfolio_perf_chart <-

debounce(

renderPlotly({

data <- react_base_data()

build_portfolio_perf_chart(data, port_loess_param = input$port_loess_param)

}),

millis = 2000) # sets wait time for debounce

This process allows for better error-identification and troubleshooting. If, for example, you want to change the work accomplished by the analytic function in some way, you can make the changes necessary to the code, and if the app fails to produce the desired outcome, you simply restart the chain: first you test the function in a vacuum outside of the app, and if it runs fine there, then you know that you have a problem with the way the reactive inputs are integrating with the function itself. This is a huge time saver when debugging.
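As a rough illustration of testing "in a vacuum," the sketch below pulls the data-preparation steps of the chart function into a small helper and runs it on fake data, with no Shiny or plotly involved. The helper name prep_portfolio_table and the fake column names are hypothetical; only the column positions, the name-parsing, and the LOESS call mirror the function shown earlier.

```r
# Hypothetical helper: the non-plotting steps of the chart function,
# separated out so they can be checked without Shiny or plotly.
prep_portfolio_table <- function(data) {
  port_tbl <- data[, c(1, 4:5)]            # date plus the two portfolio-value columns
  port_tbl[, 1] <- as.Date(port_tbl[, 1])  # dates must be Date objects for smoothing
  list(
    table = port_tbl,
    asset_names = sub("_.*", "", names(port_tbl)[2:3])
  )
}

# Fake data with the same shape as the app's base dataset (names are invented)
set.seed(42)
n <- 60
fake_data <- data.frame(
  date = as.character(seq(as.Date("2018-01-01"), by = "day", length.out = n)),
  btc_price = runif(n, 8000, 12000),
  amzn_price = runif(n, 1200, 1600),
  btc_port_val = 1000 + cumsum(rnorm(n, 0, 25)),
  amzn_port_val = 1000 + cumsum(rnorm(n, 0, 10)),
  stringsAsFactors = FALSE
)

prepped <- prep_portfolio_table(fake_data)

# The same kind of LOESS fit the chart uses, run directly on the fake data
x <- as.numeric(prepped$table[, 1])
y <- prepped$table[, 2]
smoothed <- fitted(loess(y ~ x, span = 0.33))
```

If these pieces behave as expected on fake data, any remaining failure in the app is more likely to sit in the reactive wiring than in the analytic code.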

Lastly, this allows for ease of reproducibility and hand-offs. If, say, one of your functions simply takes in a dataset and produces a chart of some sort, then it can be easily copied from Functions.R and reused elsewhere. I have done this too many times to count, ripping code from one project and, with a few alterations, instantly applying it in other contexts. This is easy to do if the functions are written in a manner not dependent on a particular Shiny reactive structure. For all these reasons, it makes sense in most cases to keep the code for the app UI and inputs cleanly separated from the analytic functions via a sourced R script.

Dashboard documentation—both a story and a manual, not one or the other.

When building an app for a customer at work, I never simply write an email with a link in it and write “here you go!” That will result in, at best, a steep learning curve, and at worst, an app used in an unintended way, resulting in user frustration or incorrect results. I always meet with the customer, explain the purpose and functionalities of the tool, walk through the app live, take feedback, and integrate any key takeaways into further iterations.

Even if you are just planning on writing some code to put up on GitHub, you should still consider all of these steps when working on the documentation for your app. In most cases, the README is the epicenter of your documentation—the README is your meeting with the customer. As you saw when reading the README for the Asset Comparison Tool, I always start my READMEs with a high-level introduction to the purpose of the app—hopefully written or supplemented with visuals (as seen below) that are easy to understand and will capture the attention of browsing passers-by.

After this introduction, the rest of the potential sections to include can vary greatly from app-to-app. In some cases apps are meant to answer one particular question, and might have a variety of filters or supplemental functionalities—one such example can be found here. As can be seen, in that README, I spend a great deal of time on the methodology after making the overall purpose clear, calling out additional options along the way. In the case of the README for the Asset Comparison Tool, however, the story is a bit different. Given that there are many questions that the app seeks to answer, it makes sense to answer each in turn, writing the README in such a way that its progression mirrors the logical flow of the progression intended for the app’s user.

One should of course not neglect to cover necessary technical information in the README as well. Anything that is not immediately clear from using the app should be clarified in the README—from calculation details to the source of your data, etc. Finally, don’t neglect the iterative component! Mention how you want to interact with prospective users and collaborators in your documentation. For example, I normally call out how I would like people to use the Issues tab on GitHub to propose any changes or additions to the documentation, or the app in general. In short, your documentation must include both the story you want to tell, and a manual for your audience to follow.

Why Shiny Dashboard?

One of the first things you will notice about the app.R code is that the entire thing is built using Shiny Dashboard as its skeleton. There are two main reasons for this, which I will touch on in turn.

Shiny Dashboard provides the biggest bang for your buck in terms of how much UI complexity and customizability you get out of just a small amount of code.

I can think of few cases where any analyst or developer would prefer longer, more verbose code to a shorter, succinct solution. That said, Shiny Dashboard’s simplicity when it comes to UI manipulation and customization is not just helpful because it saves you time as a coder, but because it is intuitive from the perspective of your audience.

Most of the folks that use the tools I have built to shed insight into economic questions don’t know how to code in R or Python, but they can, with a little help from extensive commenting and detailed documentation, understand the broad structure of an app coded in Shiny Dashboard format. This is, I believe, largely a function of two features of Shiny Dashboard: the colloquial-English-like syntax of the code for UI elements, and the lack of the necessity for in-line or external CSS.

As you can see from the example below, Shiny Dashboard’s system of “boxes” for UI building is easy to follow. Users can see a box in the app and easily tie that back to a particular box in the UI code.

Here is the box as visible to the user:

And here is the code that produces the box:

box(

title = "Portfolio Performance Inputs",

status= "primary",

solidHeader = TRUE,

h5("This box focuses on portfolio value, i.e., how much an initial investment of the amount specified below (in USD) would be worth over time, given price fluctuations."),

textInput(

inputId = "initial_investment",

label = "Enter your initial investment amount ($):",

value = "1000"),

hr(),

h5("The slider below modifies the", a(href = "https://stats.stackexchange.com/questions/2002/how-do-i-decide-what-span-to-use-in-loess-regression-in-r", "smoothing parameter"), "used in the", a(href = "https://en.wikipedia.org/wiki/Local_regression", "LOESS function"), "that produces the lines on the scatterplot."),

sliderInput(

inputId = "port_loess_param",

label = "Smoothing parameter for portfolio chart:",

min = 0.1,

max = 2,

value = .33,

step = 0.01,

animate = FALSE

),

hr(),

h5("The table below provides metrics by which we can compare the portfolios. For each column, the asset that performed best by that metric is colored green."),

height = 500,

width = 4

)

Secondly, and somewhat related to the first point, with Shiny Dashboard, much of the coloring and overall UI design comes pre-made via dashboard-wide “skins”, and box-specific “statuses.”

This is great if you are okay sacrificing a bit of control for a significant reduction in code complexity. In my experience dealing with non-coding-proficient audiences, I find that in-line CSS or complicated external CSS makes folks far more uncomfortable with the code in general. Anything you can do to reduce this anxiety and make those using your tools feel as though they understand them better is a good thing, and Shiny Dashboard makes that easier.

Shiny Dashboard’s combination of sidebar and boxes makes for easy and efficient data processing when your app has a waterfall-like analytic structure.

Having written versions of this app both in base Shiny and using Shiny Dashboard, the number one reason I chose Shiny Dashboard was the fact that the analytic questions I sought to solve followed a waterfall-like structure, as explained in the previous section. This works perfectly well with Shiny Dashboard’s combination of sidebar input controls and inputs within UI boxes themselves.

The inputs of primary importance to all users are included in the sidebar UI: the two assets to analyze, and the date range over which to compare their performance. These are the only inputs that all users, regardless of experience or intent, must absolutely use, and when they are changed, all views in the dashboard will be affected. All other inputs are stored in the UI boxes adjacent to the views that they modulate. This makes for a much more intuitive and fluid user experience, as once the initial sidebar inputs have been modulated, the sidebar can be hidden, as all other non-hidden inputs affect only the visualizations to which they are adjacent.

This waterfall-like structure also makes for more efficient reactive processes on the Shiny back-end. The inputs on the sidebar are parameters that, when changed, force the main reactive function that creates the primary dataset to fire, thus recreating the base dataset (as can be seen in the code for that base dataset’s creation below).

# utility functions to be used within the server; this enables us to use a textinput for our portfolio values

exists_as_number <- function(item) {

!is.null(item) && !is.na(item) && is.numeric(item)

}

# data-creation reactives (i.e. everything that doesn't directly feed an output)

# first is the main data pull, which will fire whenever the primary inputs (asset_1a, asset_2a, initial_investment, or port_dates1a) change

react_base_data <- reactive({

if (exists_as_number(as.numeric(input$initial_investment)) == TRUE) {

# creates the dataset to feed the viz

return(

get_pair_data(

asset_1 = input$asset_1a,

asset_2 = input$asset_2a,

port_start_date = input$port_dates1a[1],

port_end_date = input$port_dates1a[2],

initial_investment = (as.numeric(input$initial_investment))

)

)

} else {

return(

get_pair_data(

asset_1 = input$asset_1a,

asset_2 = input$asset_2a,

port_start_date = input$port_dates1a[1],

port_end_date = input$port_dates1a[2],

initial_investment = (0)

)

)

}

})
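The fallback logic above can also be tried outside the app. The sketch below is one possible way to express it in base R: free text is coerced to a number, and anything that fails to parse falls back to 0, as in the else branch of the reactive. The helper name parse_investment is hypothetical and does not appear in the app.

```r
# Hypothetical helper: turn free-text input into a usable investment amount.
# Anything that does not parse as a single number falls back to `fallback`.
parse_investment <- function(raw_text, fallback = 0) {
  value <- suppressWarnings(as.numeric(raw_text))
  if (length(value) == 1 && !is.na(value)) value else fallback
}

parse_investment("1000")  # a valid amount is passed through as a number
parse_investment("abc")   # non-numeric text falls back to 0
parse_investment("")      # an empty box also falls back to 0
```

Keeping this check in a plain function means the text-input handling can be tested with ordinary strings before any reactive is involved.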

Each of the visualizations is then produced via its own separate reactive function, each of which takes as an input the main reactive (as shown below). This makes it so that whenever the sidebar inputs are changed, all reactives fire and all visualizations are updated; however, if all that is changed is a single LOESS smoothing parameter input, only the reactive used in the creation of that particular parameter-dependent visualization fires, which makes for great computational efficiency.

# Now the reactives for the actual visualizations

output$portfolio_perf_chart <-

debounce(

renderPlotly({

data <- react_base_data()

build_portfolio_perf_chart(data, port_loess_param = input$port_loess_param)

}),

millis = 2000) # sets wait time for debounce

Why Plotly?

Plotly vs. ggplot is always a fun subject for discussion among folks who build visualizations in R. Sometimes I feel like such discussions just devolve into the same type of argument as R vs. Python for data science (my answer to this question being just pick one and learn it well), but over time I have found that there are actually some circumstances where the plotly vs. ggplot debate can yield cleaner answers.

In particular, I have found in working on this particular type of analytic app that there are two areas where plotly has a bit of an advantage: clickable interactivity, and wide data.

Those familiar with ggplot will know that every good ggplot begins with long data. It is possible, via some functions, to transform wide data into a long format, but that transformation can sometimes be problematic. While there are essentially no circumstances in which it is impossible to transform wide data into long format, there are a handful of cases where it is excessively cumbersome: namely, when dealing with indexed xts objects (as shown below) or time series / OHLC-styled data.

In these cases—either due to the sometimes-awkward way in which you have to handle rowname indexes in xts, or the time and code complexity saved by not having to transform every dataset into long format—plotly offers efficiency gains relative to ggplot.
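For readers less familiar with the distinction, the sketch below builds a tiny wide table with one column per asset and stacks it by hand into the long layout that ggplot expects. The values and column names are invented, and in practice a reshaping package such as tidyr would usually handle this step.

```r
# Wide format: one row per date, one column per asset (values are made up)
wide <- data.frame(
  date = as.Date("2018-01-01") + 0:2,
  btc  = c(13500, 14000, 14800),
  amzn = c(1170, 1190, 1205)
)

# Long format: one row per date-asset pair, with a single value column
long <- data.frame(
  date  = rep(wide$date, times = 2),
  asset = rep(c("btc", "amzn"), each = nrow(wide)),
  value = c(wide$btc, wide$amzn)
)
```

With only two assets the conversion is trivial, but every additional column or index type adds another step like this before plotting, which is the overhead the wide-data argument refers to.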

The aforementioned efficiency gains are a reason to choose plotly in some cases because it makes the life of the coder easier, but there are also reasons why it sometimes makes the life of the user easier as well.

If one of the primary utilities of a visualization is to allow the user to seamlessly and intuitively zoom in on, select, or filter the data displayed, particularly in the context of a Shiny app, then plotly should be strongly considered. Sure, ggplotly wrappers can be used to make a ggplot interactive, but with an added layer of abstraction comes an added layer of possible errors. While in most cases a ggplotly wrapper should work seamlessly, I have found that, particularly in cases where auto-sizing and margin size specification are key, ggplotly can require a great deal of added code in order to work correctly in a Shiny context.

In summary, when considering when to start with plotly vs. when to start with ggplot, I find one question to be particularly helpful: what do I value most—visual complexity and/or customization, or interactive versatility and/or preserving wide data?

If I choose the former, then ggplot is what I need; otherwise, I go with plotly. More often than not I find that ggplot emerges victorious, but even if you disagree with me in my decision-making calculus, I think it is helpful to at least think through what your personal calculus is. This will save you time when coding, as instead of playing around with various types of viz, you can simply pose the question(s) behind your calculus and know quickly what solution best fits your problem.

Why Formattable?

The case for formattable is, in my opinion, a much easier case to make than arguing for plotly vs. ggplot. The only question worth asking when deciding on whether or not to use formattable in your app is: do I want my table to tell a quick story via added visual complexity within the same cell that contains my data, or is a reference table all I am looking for? If you choose the former, formattable is probably a good way to go. You’ll notice as well that the case for formattable is very specific: in most cases there is likely a simpler solution via the DT or kableExtra packages.

The one downside that I have encountered in dealing with formattable code is the amount of code necessary to generate even moderately complicated tables. That said, this problem is easily remedied via a quick function that we can use to kill most of the duplicative coding, as seen in the example below.

First, here is the long form version:

react_formattable <- reactive({

return(

formattable(react_port_summary_table(),

list(

"Asset Portfolio Max Worth" = formatter("span",

style = x ~ style(

display = "inline-block",

direction = "rtl",

"border-radius" = "4px",

"padding-right" = "2px",

"background-color" = csscolor("darkslategray"),

width = percent(proportion(x)),

color = csscolor(gradient(x, "red", "green"))

)),

"Asset Portfolio Latest Worth" = formatter("span",

style = x ~ style(

display = "inline-block",

direction = "rtl",

"border-radius" = "4px",

"padding-right" = "2px",

"background-color" = csscolor("darkslategray"),

width = percent(proportion(x)),

color = csscolor(gradient(x, "red", "green"))

)),

"Asset Portfolio Absolute Profit" = formatter("span",

style = x ~ style(

display = "inline-block",

direction = "rtl",

"border-radius" = "4px",

"padding-right" = "2px",

"background-color" = csscolor("darkslategray"),

width = percent(proportion(x)),

color = csscolor(gradient(x, "red", "green"))

)),

"Asset Portfolio Rate of Return" = formatter("span",

style = x ~ style(

display = "inline-block",

direction = "rtl",

"border-radius" = "4px",

"padding-right" = "2px",

"background-color" = csscolor("darkslategray"),

width = percent(proportion(x)),

color = csscolor(gradient(x, "red", "green"))

))

)

)

)

})

This code can easily be shortened via the integration of a custom function, as shown below.

simple_formatter <- function(){

formatter("span",

style = x ~ style(

display = "inline-block",

direction = "rtl",

"border-radius" = "4px",

"padding-right" = "2px",

"background-color" = csscolor("darkslategray"),

width = percent(proportion(x)),

color = csscolor(gradient(x, "red", "green"))

))

}

react_formattable <- reactive({

return(

formattable(react_port_summary_table(),

list(

"Asset Portfolio Max Worth" = simple_formatter(),

"Asset Portfolio Latest Worth" = simple_formatter(),

"Asset Portfolio Absolute Profit" = simple_formatter(),

"Asset Portfolio Rate of Return" = simple_formatter()

)

)

)

})

As can be seen, formattable allows for a great deal of added complexity in crafting your table—complexity that may not be suited for all apps. That said, if you do want to quickly draw a user’s attention to something in a table, formattable is a great solution, and most of the details of the code can be greatly simplified via a function, as shown.

Conclusions:

That was a lot, I know, but I hope that this commentary, and my exemplar of the Asset Comparison Tool more generally, has helped to inform your understanding of how dashboards can serve as a helpful analytic tool. Furthermore, I hope to have prompted some thoughts as to the best practices to be followed when building such a tool. I’ll end with a quick tl;dr:

- Shave complexity wherever possible, and make code as simple as possible by keeping the code for the app’s UI and inner mechanism (inputs, reactives, etc.) separate from the code for the analytic functions and visualizations.

- Build with the most extreme cases in mind (think of how your most edge-case user might use the app, and ensure that behavior won’t break the app).

- Document, document, and then document some more. Make your README both a story and a manual.

- Give Shiny Dashboard a shot if you want an easy-to-construct UI over which you don’t need complete control when it comes to visual design.

- Pick your visualization packages based on what you want to prioritize for your user, not the other way around (this applies to ggplot, plotly, formattable, etc.).

Thanks for reading!

A New Package (hhi) for Quick Calculation of Herfindahl-Hirschman Index scores

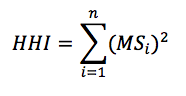

The Herfindahl-Hirschman Index (HHI) is a widely used measure of concentration in a variety of fields, including business, economics, political science, finance, and many others. Though simple to calculate (summed squared market shares of firms/actors in a single market/space), calculation of the HHI can get onerous, especially as the number of firms/actors increases and the time period grows. Thus, I decided to write a package aimed at streamlining and simplifying calculation of HHI scores. The package, hhi, calculates the concentration of a market/space based on a supplied vector of values corresponding with shares of all individual firms/actors acting in that space. The package is available on CRAN.

$$HHI = \sum_{i=1}^{N} MS_i^2$$

where MS is the market share of each firm, i, operating in a single market, and N is the number of firms in that market. Summing across all squared market shares for all firms results in the measure of concentration in the given market, HHI.
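As a quick sanity check on the definition, the sum of squared shares can be computed directly in base R. This is only a sketch of the arithmetic with made-up shares; hhi_score is a hypothetical name and is not the function exported by the hhi package.

```r
# Hypothetical helper: HHI as the sum of squared market shares (in percent)
hhi_score <- function(shares) {
  sum(shares^2)
}

# Four firms with made-up shares that sum to 100
shares <- c(40, 30, 20, 10)
hhi_score(shares)  # 1600 + 900 + 400 + 100 = 3000

# A single firm holding the whole market is the monopoly ceiling
hhi_score(100)     # 100^2 = 10000
```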

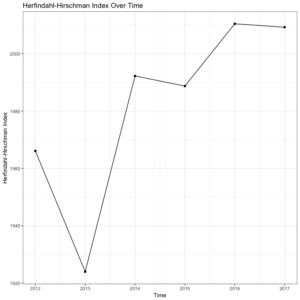

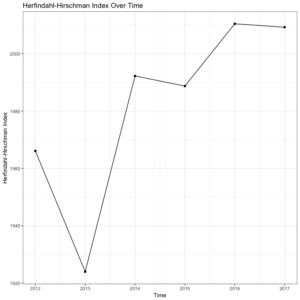


# First, install the "hhi" package, then load the library

install.packages("hhi")

library(hhi)

# Next, read in data: US Men's Footwear Company Market Shares, 2012-2017

footwear = read.table(".../footwear.txt")

# Now, call the "hhi" command to calculate HHI for 2017

hhi(footwear, "ms.2017") # first the df, then the shares variable in quotes

Often, the HHI is used as a measure of competition, with 10,000 equaling perfect monopoly (100^2) and 0.0 equaling perfect competition. As such, we can see that the U.S. men’s footwear industry in 2017 seems relatively competitive. Yet, to say anything substantive about the men’s U.S. footwear market, we really need a comparison of HHI scores for this market over time. This is where the second command comes in.

Let’s return to our men’s U.S. footwear example to see how the function works in practice. First, we need to calculate the HHI scores for each year in the data file (2012-2017), and store those as objects to make a data frame of HHI scores corresponding to individual years. Then, we simply call the plot_hhi command and generate a simple, pleasing plot of HHI scores over time. This will give us a much better sense of how our 2017 HHI score above compares with other years in this market. See the code below, followed by the output.

# First, calculate and store HHI for each year in the data file (2012-2017)

hhi.12 = hhi(footwear, "ms.2012")

hhi.13 = hhi(footwear, "ms.2013")

hhi.14 = hhi(footwear, "ms.2014")

hhi.15 = hhi(footwear, "ms.2015")

hhi.16 = hhi(footwear, "ms.2016")

hhi.17 = hhi(footwear, "ms.2017")

# Combine and create df for plotting

hhi = rbind(hhi.12, hhi.13, hhi.14, hhi.15, hhi.16, hhi.17)

year = c(2012, 2013, 2014, 2015, 2016, 2017)

hhi.data = data.frame(year, hhi)

# Finally, generate HHI time series plot using the "plot_hhi" command

plot_hhi(hhi.data, "year", "hhi")

These lines of code will give us the following plot of HHI scores for each year in the data set.

Interestingly, the men’s U.S. footwear industry seems to be getting slightly less competitive (higher HHI scores) from 2012 to 2017, on average. To say anything substantive about this trend, though, would obviously require more sophisticated methods as well as a longer time series. Yet, the value of the hhi package is allowing for quick calculation and visualization of HHI scores over time. You can download the package from CRAN or directly from the package installation context in RStudio. And as always, if you have any questions or find any bugs requiring fixing, please feel free to contact me.
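As an aside, the per-year bookkeeping can also be written as a loop over the share columns. The sketch below does this in base R with invented shares for three years, computing the sum of squared shares directly rather than calling the package; the data, the column names, and the object names are assumptions for illustration only.

```r
# Made-up market shares (percent) for four firms over three years
fake_shares <- data.frame(
  ms.2012 = c(40, 30, 20, 10),
  ms.2013 = c(45, 30, 15, 10),
  ms.2014 = c(50, 25, 15, 10)
)

# One HHI score per column, i.e. per year
hhi_by_year <- sapply(fake_shares, function(shares) sum(shares^2))

# Tidy the result into a year/score data frame ready for plotting
hhi_data <- data.frame(
  year = as.numeric(sub("ms.", "", names(hhi_by_year), fixed = TRUE)),
  hhi  = unname(hhi_by_year)
)
```

The resulting data frame has the same two-column shape as the one passed to plot_hhi above.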

Herfindahl, Orris C. 1950. “Concentration in the steel industry.” Ph.D. dissertation, Columbia University.

Hirschman, Albert O. 1945. “National power and structure of foreign trade.” Berkeley, CA: University of California Press.

Rhoades, Stephen A. 1993. “The herfindahl-hirschman index.” Federal Reserve Bulletin 79: 188.

Introducing purging: An R package for addressing mediation effects

A Simple Method for Purging Mediation Effects among Independent Variables

Mediation can occur when one independent variable swamps the effect of another, suggesting high correlation between the two variables. Though there are some great packages for mediation analysis out there, the intuition for why such analysis is needed is often unclear, especially for younger graduate students. Thus, in this blog post, my goal is to offer an intuitive overview of mediation and a simple method for “purging” variables of mediation effects so that they can be used simultaneously in multivariate analysis. The purging process detailed in this post is available in my recently released R package, purging, which is available on CRAN or at my GitHub.

Let’s consider a couple of practical examples from “real life” research contexts. First, suppose we are interested in whether committee membership relating to a specific issue domain influences the likelihood of sponsoring related issue-specific legislation. However, in the American context, where representational responsibilities permeate legislative behavior, district characteristics in similar employment-related industries likely influence self-selection onto the issue-specific committees in the first place, which we also suggest should influence the likelihood of related-issue bill sponsorship. Therefore, in this context, we have a mediation model, where employment/industry (indirect) -> committee membership (direct) -> sponsorship. Thus, we would want to purge committee membership of the effects of employment/industry in the district to observe the “pure” effect of committee membership on the likelihood of related sponsorship. This example is from my paper recently published in American Politics Research.

Or consider a second example in a different realm. Let’s say we had a model where women’s level of labor force participation determines their level of contraceptive use, and that the effect of female labor force participation on fertility is indirect, essentially filtered through its impact on contraceptive use. Once we control for contraceptive use, the direct effect of labor force participation may go away. In other words, the effect of labor force participation on fertility is likely indirect, and filtered through contraceptive use, which means the variables are also highly correlated. This second example was borrowed from Scott Basinger’s and Patrick Shea’s (University of Houston) graduate statistics labs, which originally gave me the idea of expanding this out to develop an R package dedicated to addressing this issue in a variety of contexts and for several functional forms.

These two examples offer simple ways of thinking about mediation effects (e.g., labor force (indirect) -> contraception (direct) -> fertility). If we run into this problem, a simple solution is “purging”. The steps to purge are to, first, regress the direct variable (in the second case, “contraceptive use”) on the indirect variable (in the second case, “labor force participation”). Then, store those residuals, which is the direct effect of contraception after accounting for the indirect effect of labor force participation. Then, we add the stored residuals as their own “purged variable” in the updated specification. Essentially, this purging process allows for a new direct variable that is uncorrelated with the indirect variable. When we do this, we will see that each variable is explaining unique variance in the DV of interest (you can double check this several ways, such as comparing correlation coefficients (which we will do below) or by comparing R^2 across specifications).

An Applied Example

With the intuition behind mediation and the purging solution in mind, let’s walk through a simple example using some fake data. For an example based on the second case described above using real data from the United Nations Human Development Programme, see the code file, purging example.R, at my GitHub repository.

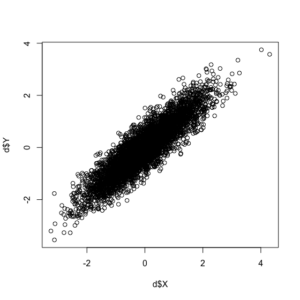

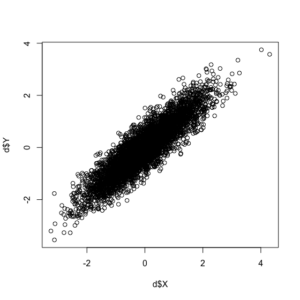

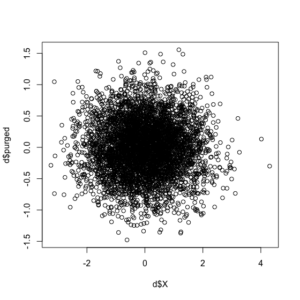

In addition to the correlation coefficient between the two variables being exactly as we specified (0.90), see this positive correlation between the two random variables in the plot below.

Now, with our correlated data created, we can call the “purge.lm” command, given that our data are continuous. Note: the package supports a variety of functional forms for continuous (linear), binary (logit and probit), and event count data (Poisson and negative binomial).

The idea behind the package is to generate the new direct-impact variable to be used in the analysis, purged of the effects of the indirect variable. To do so, simply input the name of the data frame first, followed by the name of the direct variable in quotes, and then the indirect variable also in quotes in the function. Calling the function will generate a new object (i.e., the direct variable), which can then be added to a data frame using the $ operator, with the following line of code:

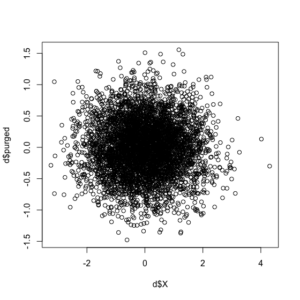

Note the correlation between the original indirect (X) variable and the new direct (Y) variable, purged of the effects of X, is -9.211365e-17, or essentially non-existent. For additional corroboration, let’s see the updated correlation plot between the X and purged-Y variables.

The purge command did as expected, with the correlation between the two variables essentially gone. You can download the package and documentation at CRAN. If you have any questions or find any bugs requiring fixing, please feel free to contact me. As this procedure was first developed and implemented (using the binary/logit iteration discussed above in the first example) in a now-published paper, please cite use of the package as: Waggoner, Philip D. 2018. “Do Constituents Influence Issue-Specific Bill Sponsorship?” American Politics Research, <https://doi.org/10.1177/1532673X18759644>

As a final note, once the intuition is mastered, be sure to check out the great work on mediation from many folks, including Kosuke Imai (Princeton), Luke Keele (Georgetown), and several others. See Imai’s mediation site as a sound starting place with code, papers, and more.

Thanks and enjoy!

Mediation can occur when one independent variable swamps the effect of another, suggesting high correlation between the two variables. Though there are some great packages for mediation analysis out there, the simple intuition of its need is often ambiguous, especially for younger graduate students. Thus, in this blog post, it is my goal to introduce an intuitive overview of mediation and offer a simple method for “purging” variables of mediation effects for their simultaneous use in multivariate analysis. The purging process detailed in this blog is available in my recently released R package, purging, which is available on CRAN or at my GitHub.

Let’s consider a couple of practical examples from “real life” research contexts. First, suppose we are interested in whether committee membership relating to a specific issue domain influences the likelihood of sponsoring related issue-specific legislation. In the American context, however, representational responsibilities permeate legislative behavior, so district characteristics, such as employment in related industries, likely influence self-selection onto the issue-specific committees in the first place. We would also expect those characteristics to influence the likelihood of related-issue bill sponsorship. Therefore, in this context, we have a mediation model, where employment/industry (indirect) -> committee membership (direct) -> sponsorship. Thus, we would want to purge committee membership of the effects of employment/industry in the district to observe the “pure” effect of committee membership on the likelihood of related sponsorship. This example is from my paper recently published in American Politics Research.

Or consider a second example in a different realm. Let’s say we had a model where women’s level of labor force participation determines their level of contraceptive use, and that the effect of female labor force participation on fertility is indirect, essentially filtered through its impact on contraceptive use. Once we control for contraceptive use, the direct effect of labor force participation may go away. In other words, the effect of labor force participation on fertility is likely indirect, and filtered through contraceptive use, which means the variables are also highly correlated. This second example was borrowed from Scott Basinger’s and Patrick Shea’s (University of Houston) graduate statistics labs, which originally gave me the idea of expanding this out to develop an R package dedicated to addressing this issue in a variety of contexts and for several functional forms.

These two examples offer simple ways of thinking about mediation effects (e.g., labor force (indirect) -> contraception (direct) -> fertility). If we run into this problem, a simple solution is “purging”. First, regress the direct variable (in the second case, “contraceptive use”) on the indirect variable (in the second case, “labor force participation”). Second, store the residuals from that regression, which represent the direct effect of contraception after accounting for the indirect effect of labor force participation. Third, add the stored residuals as their own “purged variable” in the updated specification. Essentially, this purging process yields a new direct variable that is uncorrelated with the indirect variable. When we do this, we will see that each variable explains unique variance in the DV of interest. You can double check this in several ways, such as comparing correlation coefficients (which we will do below) or comparing R^2 across specifications.
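The three purging steps can also be sketched by hand in base R. This is only a minimal illustration on simulated data, not the package's implementation; the variable names (`labor`, `contraception`, `contraception_purged`) and the simulated coefficients are assumptions made up for this example.

```r
# Hypothetical simulated data: labor force participation (indirect)
# drives contraceptive use (direct)
set.seed(42)
n <- 1000
labor <- rnorm(n)
contraception <- 0.8 * labor + rnorm(n, sd = 0.5)

# Step 1: regress the direct variable on the indirect variable
first_stage <- lm(contraception ~ labor)

# Step 2: store the residuals, i.e. the part of contraception
# that is not linearly explained by labor
contraception_purged <- residuals(first_stage)

# Step 3: compare the correlation with the indirect variable
# before and after purging
cor_before <- cor(labor, contraception)
cor_after <- cor(labor, contraception_purged)
print(c(before = cor_before, after = cor_after))
```

Because ordinary least squares residuals are orthogonal to the regressors, the "after" correlation should be zero up to floating-point error.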

An Applied Example

With the intuition behind mediation and the purging solution in mind, let’s walk through a simple example using some fake data. For an example based on the second case described above using real data from the United Nations Human Development Programme, see the code file, purging example.R, at my GitHub repository.

```r
# First, install the MASS package for the "mvrnorm" function
install.packages("MASS")
library(MASS)

# Second, install the purging package directly from CRAN for the "purge.lm" function
install.packages("purging")
library(purging)

# Set some parameters to guide our simulation
n = 5000
rho = 0.9

# Create some fake data
d = mvrnorm(n = n, mu = c(0, 0), Sigma = matrix(c(1, rho, rho, 1), nrow = 2), empirical = TRUE)

# Store each correlated variable as its own object
X = d[, 1]
Y = d[, 2]

# Create a dataframe of your two variables
d = data.frame(X, Y)

# Verify the correlation between these two normally distributed variables is what we set (rho = 0.9)
cor(d$X, d$Y)
plot(d$X, d$Y)
```

In addition to the correlation coefficient between the two variables being exactly as we specified (0.90), see this positive correlation between the two random variables in the plot below.
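The correlation comes out at exactly 0.90 because of the `empirical = TRUE` argument to `mvrnorm`, which makes `mu` and `Sigma` describe the sample itself rather than the population it is drawn from. The short sketch below contrasts the two settings; the seed and the smaller sample size of 200 are arbitrary choices for illustration.

```r
library(MASS)  # provides mvrnorm

set.seed(1)
Sigma <- matrix(c(1, 0.9, 0.9, 1), nrow = 2)

# empirical = TRUE: the sample covariance matches Sigma exactly
d_exact <- mvrnorm(n = 200, mu = c(0, 0), Sigma = Sigma, empirical = TRUE)

# empirical = FALSE (the default): Sigma describes the population only,
# so the sample correlation varies from draw to draw
d_draw <- mvrnorm(n = 200, mu = c(0, 0), Sigma = Sigma)

cor_exact <- cor(d_exact[, 1], d_exact[, 2])
cor_draw <- cor(d_draw[, 1], d_draw[, 2])
print(c(empirical = cor_exact, default = cor_draw))
```

With the default setting, the sample correlation should land near 0.9 but will generally not equal it.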

Now, with our correlated data created, we can call the “purge.lm” command, given that our data are continuous. Note: the package supports a variety of functional forms for continuous (linear), binary (logit and probit), and event count data (Poisson and negative binomial).
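To give a feel for what purging might look like when the direct variable is binary, here is a rough base R sketch using a logit first stage. It deliberately does not call the purging package, and the choice of response residuals (observed value minus fitted probability) is an assumption for illustration; the package may compute its purged variable differently. All variable names and the simulated slope are hypothetical.

```r
# Hypothetical simulated data with a binary direct variable
set.seed(7)
n <- 2000
X <- rnorm(n)                                          # indirect variable
Y_bin <- rbinom(n, size = 1, prob = plogis(1.5 * X))   # binary direct variable

# First stage: logit regression of the direct variable on the indirect one
first_stage <- glm(Y_bin ~ X, family = binomial(link = "logit"))

# Assumption: use response residuals (observed minus fitted probability)
Y_purged <- residuals(first_stage, type = "response")

# Compare the correlation with X before and after purging
cor_before <- cor(X, Y_bin)
cor_after <- cor(X, Y_purged)
print(c(before = cor_before, after = cor_after))
```

For a logit model with an intercept, the fitted score equations force these response residuals to be uncorrelated with the regressor, so the "after" value should be close to zero, limited only by the convergence tolerance of `glm`.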

The idea behind the package is to generate the new direct-impact variable to be used in the analysis, purged of the effects of the indirect variable. To do so, simply input the name of the data frame first, followed by the name of the direct variable in quotes, and then the indirect variable also in quotes in the function. Calling the function will generate a new object (i.e., the direct variable), which can then be added to a data frame using the $ operator, with the following line of code:

```r
df$purged.var <- purged.var
```

Let’s now see the purge command in action using our fake data.

```r
# Purge the "direct" variable, Y, of the mediation effects of X
# (direct/indirect selection will depend on your model specification)
purge.lm(d, "Y", "X") # df, "direct", "indirect"

# You will get an automatic suggestion message to store the values in the df
purged = purge.lm(d, "Y", "X") # Store as its own object
d$purged = purged # Attach to df

# Finally, check the correlation and the plot to see the effects purged from the original "Y" (direct) variable
cor(d$X, d$purged)
plot(d$X, d$purged)
```

Note the correlation between the original indirect (X) variable and the new direct (Y) variable, purged of the effects of X, is -9.211365e-17, or essentially non-existent. For additional corroboration, let’s see the updated correlation plot between the X and purged-Y variables.
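To see how a purged variable might behave in the updated specification, the sketch below compares a regression using the original direct variable with one using a purged version. The outcome `Z`, the simulated coefficients, and the use of plain `lm` residuals as the purged variable are all assumptions for illustration rather than output from the package.

```r
# Hypothetical simulated data
set.seed(99)
n <- 1000
X <- rnorm(n)                        # indirect variable
Y <- 0.9 * X + rnorm(n, sd = 0.4)    # direct variable, highly correlated with X
Z <- 0.5 * Y + rnorm(n)              # outcome, affected by X only through Y

# Purged version of the direct variable (residuals from Y ~ X)
Y_purged <- residuals(lm(Y ~ X))

# Original and updated specifications
original <- lm(Z ~ X + Y)
updated <- lm(Z ~ X + Y_purged)

print(round(coef(summary(original)), 3))
print(round(coef(summary(updated)), 3))

r2_original <- summary(original)$r.squared
r2_updated <- summary(updated)$r.squared
print(c(original = r2_original, updated = r2_updated))
```

In this linear setup both specifications span the same predictor space, so R^2 and the coefficient on the direct variable are unchanged. What changes is the coefficient on X, which in the updated model also picks up the part of its effect that runs through Y.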

The purge command did as expected, with the correlation between the two variables essentially gone. You can download the package and documentation at CRAN. If you have any questions or find any bugs requiring fixing, please feel free to contact me. As this procedure was first developed and implemented (using the binary/logit iteration discussed above in the first example) in a now-published paper, please cite use of the package as: Waggoner, Philip D. 2018. “Do Constituents Influence Issue-Specific Bill Sponsorship?” American Politics Research, <https://doi.org/10.1177/1532673X18759644>

As a final note, once the intuition is mastered, be sure to check out the great work on mediation from many folks, including Kosuke Imai (Princeton), Luke Keele (Georgetown), and several others. See Imai’s mediation site as a sound starting place with code, papers, and more.

Thanks and enjoy!

Discriminant Analysis: Statistics All The Way

Discriminant analysis is used when the variable to be predicted is categorical. It requires that the category of each data point is known in advance, which distinguishes it from cluster analysis, where the classification criteria are not known. It works by calculating a score from all the predictor variables and selecting a class based on the value of that score. Hence the name: discriminant analysis discriminates between data points and assigns them to classes or categories based on the predictor variables. This article delves into the linear discriminant analysis function in R and explains the process and concepts in depth. Before we move further, let us look at the assumptions of discriminant analysis, which are quite similar to those of MANOVA.

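$$(x-\mu_1)^{T}\,\Sigma_1^{-1}\,(x-\mu_1) + \ln|\Sigma_1| - (x-\mu_2)^{T}\,\Sigma_2^{-1}\,(x-\mu_2) - \ln|\Sigma_2| \quad \text{(compared against a threshold } T\text{)}$$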
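The score above can be computed directly in base R. The sketch below is a minimal illustration that assumes two classes taken from the built-in `iris` data (versicolor as class 1 and virginica as class 2); the helper name `quad_score` is hypothetical.

```r
# Assumption: use two iris species as the two classes
x1 <- as.matrix(iris[iris$Species == "versicolor", 1:4])
x2 <- as.matrix(iris[iris$Species == "virginica", 1:4])

# Class means and covariance matrices
mu1 <- colMeans(x1)
S1 <- cov(x1)
mu2 <- colMeans(x2)
S2 <- cov(x2)

# Quadratic score: squared Mahalanobis distance plus log-determinant
# for class 1, minus the same quantity for class 2
quad_score <- function(x) {
  mahalanobis(x, mu1, S1) + log(det(S1)) -
    mahalanobis(x, mu2, S2) - log(det(S2))
}

score1 <- quad_score(x1)  # scores for observations from class 1
score2 <- quad_score(x2)  # scores for observations from class 2
print(c(mean_class1 = mean(score1), mean_class2 = mean(score2)))
```

Under Gaussian class densities this score equals twice the log of the class 2 density over the class 1 density, so low values favor class 1 and high values favor class 2. The threshold T then sets where the boundary falls.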

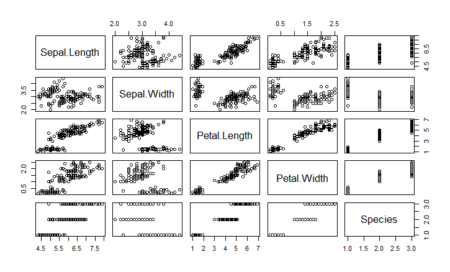

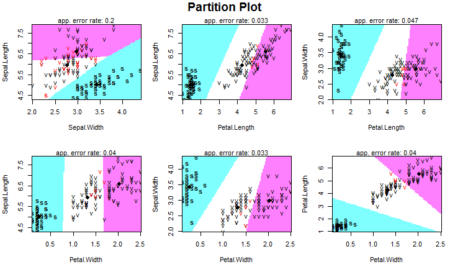

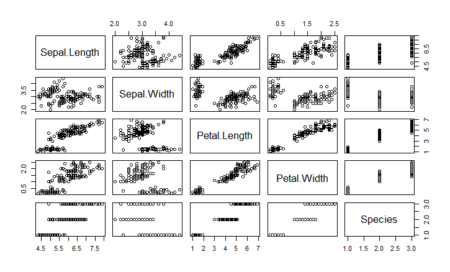

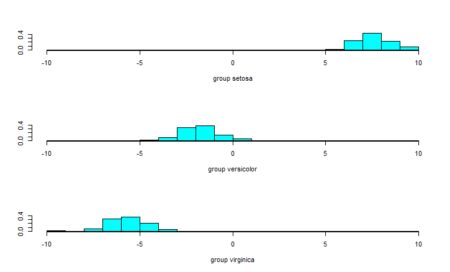

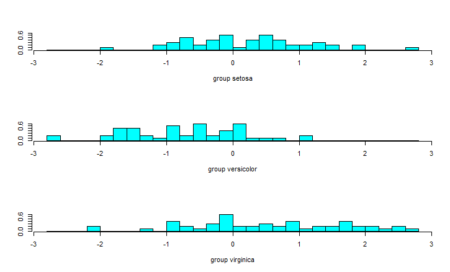

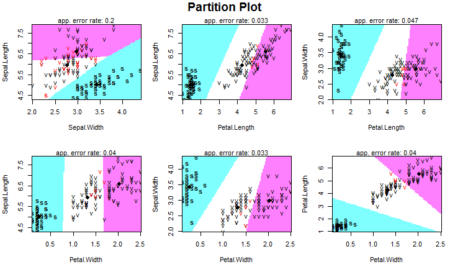

As we can see, one of the classes is completely separate while the other two overlap somewhat. However, LDA is still able to distinguish between the two. A better way to use lda() is with leave-one-out cross-validation, which can be enabled by passing CV = TRUE to the lda function.
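A minimal sketch of the cross-validated call is shown here. It assumes the built-in `iris` data with `Species` as the class variable, which may differ from the data used in the figures above.

```r
library(MASS)  # provides lda

# Assumption: iris data, predicting Species from the four measurements
fit_cv <- lda(Species ~ ., data = iris, CV = TRUE)

# With CV = TRUE, lda returns leave-one-out predictions ($class)
# and posterior probabilities ($posterior) instead of a fitted model
confusion <- table(actual = iris$Species, predicted = fit_cv$class)
accuracy <- sum(diag(confusion)) / sum(confusion)

print(confusion)
print(accuracy)
```

Each observation is classified by a model fitted without it, so the resulting accuracy is a less optimistic estimate than one computed on the training data.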