We typically think of English and related spelling systems as mapping orthographic units, or graphemes, onto units of speech sound, or phonemes. For instance, each of the three letters in “pen” maps onto one of the three phonemes /p/, /ɛ/, and /n/ in the spoken word. But the English spelling system is flexible enough to encode other information while still preserving the mapping to phonemes. For example, padding the end of a disyllabic word with extra unpronounced letters signals that stress falls on the second syllable rather than on the first, which is the more common English pattern (e.g., compare “trusty” with “trustee,” “gravel” with “gazelle,” or “rivet” with “roulette”).

Proper names provide a rich resource for exploring how spelling systems convey more than sound. Consider “Gerry” and “Gerrie,” for example. The two names are pronounced the same, but the final vowel /i/ is spelled differently, and the difference is associated with gender: Between 1880 and 2016, 99% of children named “Gerrie” were girls, compared with 32% of children named “Gerry.” More generally, as documented in the code below, name-final “ie” is more strongly associated with girls than name-final “y.” On average, names ending in “ie” and “y” are given to girls 84% and 66% of the time, respectively (i.e., names ending in the sound /i/ tend to be given to girls, but more so if spelled “ie” than “y”).

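```r
library(dplyr)

# Data frame I created from the US Census dataset of baby names
load("/Users/MHK/R/Baby Names/NamesOverall.RData")  # provides sumNames

# Flag names ending in "y" or "ie" and keep only those names
sumNames$final_y_ie <- grepl("y$|ie$", sumNames$Name)
final_y_ie <- filter(sumNames, final_y_ie)

# Proportion of children given each name who are girls
final_y_ie$prop_f <- final_y_ie$femaleTotal / final_y_ie$allTotal

# Welch t-test: mean proportion female for "-ie" names (x) vs. "-y" names (y)
t.test(final_y_ie$prop_f[grepl("ie$", final_y_ie$Name)],
       final_y_ie$prop_f[grepl("y$", final_y_ie$Name)])
```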

```
        Welch Two Sample t-test

t = 20.624, df = 7024.5, p-value < 2.2e-16
alternative hypothesis: true difference in means is not equal to 0
95 percent confidence interval:
 0.1684361 0.2038195
sample estimates:
mean of x mean of y
0.8412466 0.6551188
```
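The t-test above compares unweighted means: each name counts once, however many children received it. Below is a minimal dplyr-style sketch of the same per-ending summary. It runs on a tiny hypothetical data frame (invented names and counts, not real baby-name data) and assumes the same `Name`, `femaleTotal`, and `allTotal` columns as `sumNames`.

```r
library(dplyr)

# Hypothetical toy data: invented names and counts, NOT real baby-name figures.
# Columns mirror those assumed in sumNames.
toy <- data.frame(
  Name        = c("Zannie", "Quorie", "Zanny", "Quory", "Bexter"),
  femaleTotal = c(90, 80, 60, 40, 5),
  allTotal    = c(100, 100, 100, 100, 100),
  stringsAsFactors = FALSE
)

ending_summary <- toy %>%
  # Label each name by its final spelling; names with neither ending get NA
  mutate(ending = case_when(grepl("ie$", Name) ~ "ie",
                            grepl("y$", Name)  ~ "y",
                            TRUE               ~ NA_character_),
         prop_f = femaleTotal / allTotal) %>%
  filter(!is.na(ending)) %>%
  # Unweighted mean per ending: every name counts once, as in the t-test
  group_by(ending) %>%
  summarise(n_names = n(), mean_prop_f = mean(prop_f))

print(ending_summary)
```

Running the same pipeline on `final_y_ie` should reproduce the "mean of x" and "mean of y" values from the t-test output, assuming the columns are named as in the code above.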

Capitalizing the first letter of proper nouns is perhaps the best-known example of how we use the flexibility of spelling systems to convey information beyond pronunciation. More subtly, we sometimes increase the prominence of proper names by padding them with extra unpronounced letters, as in “Penn” versus “pen” and “Kidd” versus “kid.” An interesting question is what factors influence whether a name is padded, which brings us to Schitt’s Creek.

The title of the popular series plays on exactly this: padding the name with extra letters that don’t affect pronunciation hides the expletive. This suggests a hypothesis: Padded names should be more common when the unpadded version carries negative sentiment, which might otherwise carry over via psychological “contagion” from the surname to the person. So surnames like “Grimm” and “Sadd” should be more common than surnames like “Winn.”

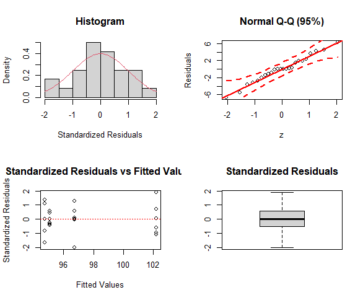

I tested this hypothesis using a data set of surnames that occur at least 100 times in the 2010 US Census. Specifically, I flagged all monosyllabic names ending in a double letter. I restricted the analysis to monosyllabic names because, as noted above, letter doubling can affect stress placement in longer words, which could create differences between the padded and unpadded versions. Next, I stripped the final letter from each of these names and matched the result against a sentiment dictionary. Finally, I tested whether surnames were more likely to be padded when the unpadded version expressed negative sentiment.

The following R code walks through these steps. We’ll start by reading the downloaded CSV file of surnames into a data frame and converting the surnames from upper case (as in the Census file) to lower case for matching against a sentiment dictionary:

```r
library(tidyverse)

# Read the 2010 Census surname file and lower-case the names for dictionary matching
surnames <- read.csv("/Users/mike/R/Names/Names_2010Census.csv", header = TRUE)
surnames$name <- tolower(surnames$name)
```

Next, we’ll flag all monosyllabic names using the nsyllable() function in the quanteda package, identify those that end in a double letter, and place them in a new data frame:

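```r
library(quanteda)

# Count syllables in each surname
surnames$num_syllables <- nsyllable(surnames$name)

# Flag names ending in a doubled letter, e.g. "grimm" or "sadd"
surnames$finalDouble <- grepl("(.)\\1$", surnames$name)

# Keep monosyllabic names with a final double letter
oneSylFinalDouble <- filter(surnames, num_syllables == 1 & finalDouble)
oneSylFinalDouble <- select(oneSylFinalDouble, name, num_syllables, finalDouble)
```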
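The final-double flag depends on a regular-expression backreference. Here is a small sketch on a hypothetical vector of lower-case names showing what the pattern does and does not match.

```r
# Hypothetical lower-case surnames, chosen only to illustrate the pattern
test_names <- c("grimm", "grim", "sadd", "sad", "penn", "pen", "hess")

# "(.)" captures any single character, "\\1" requires that same character
# to appear again immediately, and "$" anchors the pair to the end of the name
is_final_double <- grepl("(.)\\1$", test_names)

# Show each name alongside its flag
print(setNames(is_final_double, test_names))
```

Conventional spellings such as "hess" are flagged too. Such names only survive the later steps if their stripped form (here "hes") has an entry in the sentiment dictionary.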

Finally, we’ll create a variable that strips each name of its final letter (e.g., “grimm” becomes “grim”) and match the stripped form against a sentiment dictionary, specifically VADER (“Valence Aware Dictionary and sEntiment Reasoner”). We’ll then put the matching words into a new data frame:

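```r
# Strip the final letter from each name (e.g., "grimm" -> "grim")
oneSylFinalDouble$Stripped <- substr(oneSylFinalDouble$name, 1, nchar(oneSylFinalDouble$name) - 1)

# Load the VADER lexicon and keep each word with its mean sentiment rating
vader <- read.csv("/Users/MHK/R/Lexicon/vader_sentiment_words.csv", header = TRUE)
vader$word <- as.character(vader$word)
vader <- select(vader, word, mean_sent)

# Attach sentiment to each stripped name, then keep only names that matched
oneSylFinalDouble <- left_join(oneSylFinalDouble, vader, by = c("Stripped" = "word"))
sentDouble <- filter(oneSylFinalDouble, !is.na(mean_sent))
```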
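To see the strip-and-join step in isolation, here is a self-contained sketch on a hypothetical four-name data frame. The mini-lexicon stands in for the VADER file and borrows three scores from the results listed in this post. Its column names mirror those assumed in the code above.

```r
library(dplyr)

# Hypothetical mini-lexicon standing in for the VADER file
# (scores for "grim", "sad", and "win" copied from the results in this post)
toy_lexicon <- data.frame(word = c("grim", "sad", "win"),
                          mean_sent = c(-2.7, -2.1, 2.8),
                          stringsAsFactors = FALSE)

# Hypothetical padded surnames; "pen" is deliberately absent from the lexicon
toy_surnames <- data.frame(name = c("grimm", "sadd", "winn", "penn"),
                           stringsAsFactors = FALSE)

toy_matched <- toy_surnames %>%
  # Drop the final (doubled) letter: "grimm" -> "grim"
  mutate(Stripped = substr(name, 1, nchar(name) - 1)) %>%
  # Attach a sentiment score wherever the stripped form is in the lexicon
  left_join(toy_lexicon, by = c("Stripped" = "word")) %>%
  # Keep only surnames whose unpadded form has a sentiment score
  filter(!is.na(mean_sent))

print(toy_matched)
```

"penn" drops out because "pen" has no lexicon entry. This is how the full pipeline narrows the flagged names down to those with a scored unpadded form.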

The final set of surnames is small, just 36 cases after removing one duplicate, so we can list them all:

```r
select(sentDouble, name, mean_sent) %>% arrange(mean_sent)
```

```
      name mean_sent
1     warr      -2.9
2   cruell      -2.8
3    stabb      -2.8
4    grimm      -2.7
5     robb      -2.6
6     sinn      -2.6
7     bann      -2.6
8  threatt      -2.4
9    hurtt      -2.4
10  grieff      -2.2
11    fagg      -2.1
12    sadd      -2.1
13   glumm      -2.1
14   liess      -1.8
15   crapp      -1.6
16    nagg      -1.5
17    gunn      -1.4
18   trapp      -1.3
19   stopp      -1.2
20   dropp      -1.1
21    cutt      -1.1
22   dragg      -0.9
23    rigg      -0.5
24    wagg      -0.2
25  stoutt       0.7
26    topp       0.8
27    fann       1.3
28    fitt       1.5
29  smartt       1.7
30    yess       1.7
31   gladd       2.0
32    hugg       2.1
33    funn       2.3
34    wonn       2.7
35    winn       2.8
36    loll       2.9
```

Of these 36 cases, 24 have negative sentiment once the final letter is removed and only 12 have positive sentiment. A one-tailed binomial test confirms that this is a significant skew toward padding surnames that would express negative sentiment if unpadded:

```r
binom.test(24, 36, alternative = "greater")
```

```
        Exact binomial test

data:  24 and 36
number of successes = 24, number of trials = 36, p-value = 0.03262
alternative hypothesis: true probability of success is greater than 0.5
95 percent confidence interval:
 0.516585 1.000000
sample estimates:
probability of success
             0.6666667
```
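As a check, the 24/12 split can be recomputed from the scores in the table above. The one-tailed p-value is simply the upper tail of a Binomial(36, 0.5) distribution. This sketch hard-codes the 36 listed scores rather than reading the data files.

```r
# Mean VADER scores of the 36 stripped surnames, copied from the table above
scores <- c(-2.9, -2.8, -2.8, -2.7, -2.6, -2.6, -2.6, -2.4, -2.4, -2.2,
            -2.1, -2.1, -2.1, -1.8, -1.6, -1.5, -1.4, -1.3, -1.2, -1.1,
            -1.1, -0.9, -0.5, -0.2,
             0.7,  0.8,  1.3,  1.5,  1.7,  1.7,  2.0,  2.1,  2.3,  2.7,
             2.8,  2.9)

n_neg <- sum(scores < 0)   # surnames whose unpadded form is negative
n_pos <- sum(scores > 0)   # surnames whose unpadded form is positive
n     <- n_neg + n_pos

# One-tailed exact test: P(X >= n_neg) when X ~ Binomial(n, 0.5)
bt <- binom.test(n_neg, n, p = 0.5, alternative = "greater")

# The same tail probability, summed directly from the binomial pmf
p_manual <- sum(dbinom(n_neg:n, n, 0.5))

c(n_neg = n_neg, n_pos = n_pos, p_test = bt$p.value, p_manual = p_manual)
```

Both p-values should agree with the 0.03262 reported by `binom.test` above.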

An alternative explanation for this pattern is that there are more surnames with negative than positive sentiment overall, providing greater opportunity for negative surnames to be padded with extra letters. However, if anything, there are slightly more surnames with positive than negative sentiment in the Census database (294 vs. 254).
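One way to fold this base rate into the test is to replace the 0.5 null with the negative share of the two pools. This assumes the 294 and 254 counts are the pools of surnames available for padding. The sketch below shows one possible adjustment of that kind.

```r
# Counts reported in the text: Census surnames with positive vs. negative
# sentiment (assumed here to be the pools available for padding)
n_pos_pool <- 294
n_neg_pool <- 254

# Null share of negative cases if padding simply tracked pool sizes
p_null <- n_neg_pool / (n_neg_pool + n_pos_pool)

# One-tailed test of 24 negative out of 36 padded surnames against that share
bt_adj <- binom.test(24, 36, p = p_null, alternative = "greater")
bt_adj$p.value
```

Negative surnames are the smaller pool, so the adjusted null proportion falls below 0.5. The one-tailed p-value can therefore only shrink relative to the unadjusted test.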

In sum, US surnames are more likely to be padded with extra letters when the unpadded version would express negative rather than positive sentiment. These results align with other naming patterns that indicate an aversion toward negative sentiment. Such aversions are consistent with nominal realism, the cross-cultural tendency to transfer connotations from a name to the named.

Finally, in case you’re wondering: no, none of “Schitt,” “Shitt,” or “Sh*t” appears in the US Census database of surnames (at least in 2010). However, “Dicke,” “Asse,” and “Paine” do appear, illustrating another way to pad proper names besides letter doubling: adding a final unpronounced “e.” But that’s a tale for another blog post.